|

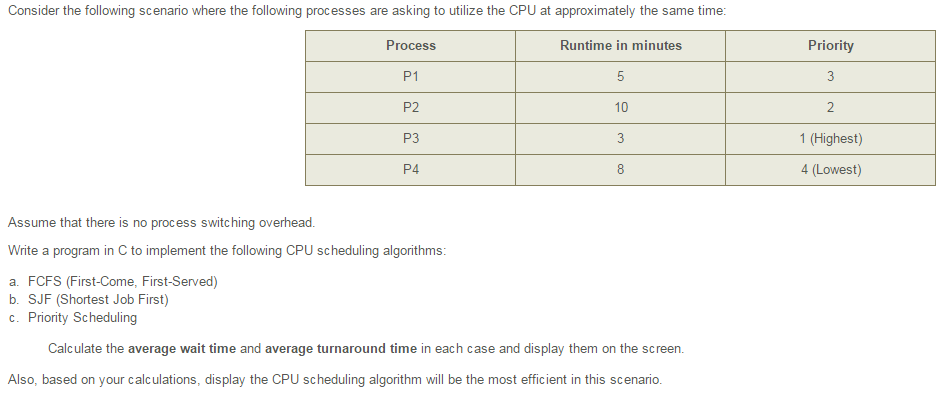

Operating Systems: CPU Scheduling. The following subsections will explain several common scheduling strategies, looking at only a single CPU burst each for a small number of processes. Obviously real systems have to deal with a lot more simultaneous processes executing their CPU- I/O burst cycles. First- Come First- Serve Scheduling, FCFSFCFS is very simple - Just a FIFO queue, like customers waiting in line at the bank or the post office or at a copying machine. Unfortunately, however, FCFS can yield some very long average wait times, particularly if the first process to get there takes a long time. For example, consider the following three processes: Process. Burst Time P1. 24. P2. 3P3. 3In the first Gantt chart below, process P1 arrives first. The average waiting time for the three processes is ( 0 + 2. In the second Gantt chart below, the same three processes have an average wait time of ( 0 + 3 + 6 ) / 3 = 3. In computing, scheduling is the method by which work specified by some means is assigned to resources that complete the work. The work may be virtual computation elements such as threads, processes or data flows, which are in.

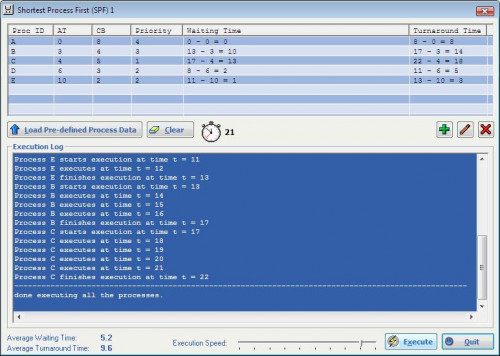

Simulation of following CPU scheduling algorithms: a. SJF (preemptive and non-preemptive) c. Priority Scheduling (preemptive and non-preemptive) d. Round Robin Scheduling CPU Scheduling Algoritms C Program.The total run time for the three bursts is the same, but in the second case two of the three finish much quicker, and the other process is only delayed by a short amount. When one CPU intensive process blocks the CPU, a number of I/O intensive processes can get backed up behind it, leaving the I/O devices idle. When the CPU hog finally relinquishes the CPU, then the I/O processes pass through the CPU quickly, leaving the CPU idle while everyone queues up for I/O, and then the cycle repeats itself when the CPU intensive process gets back to the ready queue. However that does not work for short- term CPU scheduling on an interactive system. Another option would be to statistically measure the run time characteristics of jobs, particularly if the same tasks are run repeatedly and predictably. But once again that really isn't a viable option for short term CPU scheduling in the real world. A more practical approach is to predict the length of the next burst, based on some historical measurement of recent burst times for this process. One simple, fast, and relatively accurate method is the exponential average, which can be defined as follows. If alpha is 1. 0, then past history is ignored, and we assume the next burst will be the same length as the last burst. If alpha is 0. 0, then all measured burst times are ignored, and we just assume a constant burst time. Most commonly alpha is set at 0.

Figure 5. 3: SJF can be either preemptive or non- preemptive. Preemption occurs when a new process arrives in the ready queue that has a predicted burst time shorter than the time remaining in the process whose burst is currently on the CPU. Preemptive SJF is sometimes referred to as shortest remaining time first scheduling. For example, the following Gantt chart is based upon the following data: Process. Arrival Time Burst Time P1. P2. 14. P3. 29p. 43. The average wait time in this case is ( ( 5 - 3 ) + ( 1. Process is a smallest work unit of a program which requires a set of. A Novel CPU Scheduling Algorithm. Priority Based Scheduling. 170 Chapter 6 CPU Scheduling Java thread priority = a Solaris native thread. C - Program to Implement CPU Scheduling Algorithms. CPU Scheduling Algoritms C Program.

This book uses low number for high priorities, with 0 being the highest possible priority. For example, the following Gantt chart is based upon these process burst times and priorities, and yields an average waiting time of 8. Process. Burst Time Priority. P1. 10. 3P2. 11. P3. P4. 15. P5. 52. Priorities can be assigned either internally or externally. Internal priorities are assigned by the OS using criteria such as average burst time, ratio of CPU to I/O activity, system resource use, and other factors available to the kernel. External priorities are assigned by users, based on the importance of the job, fees paid, politics, etc. Priority scheduling can be either preemptive or non- preemptive. Priority scheduling can suffer from a major problem known as indefinite blocking, or starvation, in which a low- priority task can wait forever because there are always some other jobs around that have higher priority. Under this scheme a low- priority job will eventually get its priority raised high enough that it gets run. In the following example the average wait time is 5. Process. Burst Time P1. P2. 3P3. 3The performance of RR is sensitive to the time quantum selected. If the quantum is large enough, then RR reduces to the FCFS algorithm; If it is very small, then each process gets 1/nth of the processor time and share the CPU equally. BUT, a real system invokes overhead for every context switch, and the smaller the time quantum the more context switches there are. Consider, for example the processes shown in Figure 5. In general, turnaround time is minimized if most processes finish their next cpu burst within one time quantum. For example, with three processes of 1. However, if it is made too large, then RR just degenerates to FCFS. A rule of thumb is that 8. CPU bursts should be smaller than the time quantum. Multilevel Queue Scheduling. When processes can be readily categorized, then multiple separate queues can be established, each implementing whatever scheduling algorithm is most appropriate for that type of job, and/or with different parametric adjustments. Scheduling must also be done between queues, that is scheduling one queue to get time relative to other queues. Two common options are strict priority ( no job in a lower priority queue runs until all higher priority queues are empty ) and round- robin ( each queue gets a time slice in turn, possibly of different sizes. Multilevel Feedback- Queue Scheduling. Multilevel feedback queue scheduling is similar to the ordinary multilevel queue scheduling described above, except jobs may be moved from one queue to another for a variety of reasons. If the characteristics of a job change between CPU- intensive and I/O intensive, then it may be appropriate to switch a job from one queue to another. Aging can also be incorporated, so that a job that has waited for a long time can get bumped up into a higher priority queue for a while. Multilevel feedback queue scheduling is the most flexible, because it can be tuned for any situation. But it is also the most complex to implement because of all the adjustable parameters. Some of the parameters which define one of these systems include. The number of queues. The scheduling algorithm for each queue. The methods used to upgrade or demote processes from one queue to another.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. Archives

January 2017

Categories |

RSS Feed

RSS Feed